Is AgentCore the New Lambda?

An investigation of how AgentCore works and whether it could become the new Lambda.

Context

The most recent AWS Community Day Spain, held in Zaragoza, took place on Saturday, November 15, 2025. The keynote, “Now Go Unbuild”, was delivered by Álvaro Hernández Tortosa, who introduced five “out-of-the-box” challenges regarding AWS services. One challenge stood out: “Is AgentCore the new Lambda?”

Hands On

I accepted this challenge. In this repository, I test the feasibility of running AgentCore as a next-generation version of Lambda. Testing the boundaries of what works—and what doesn’t—is, in my opinion, the best way to learn.

The project evolved in two phases:

-

Phase 1: Proof of concept using the AgentCore CLI toolkit (agentcore deploy).

-

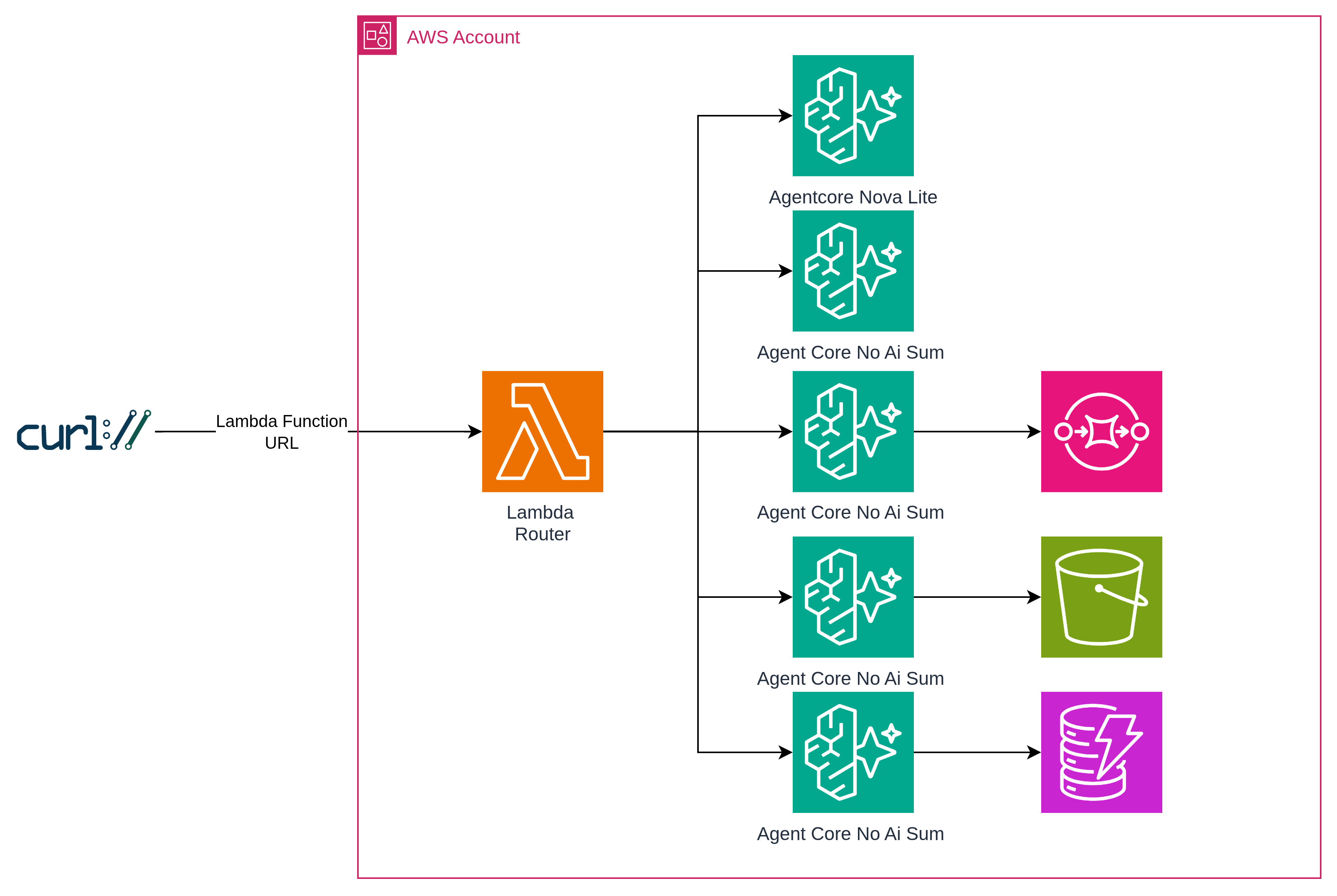

Phase 2: Migration to Terraform for full Infrastructure-as-Code (IaC) control, removing CLI dependencies, and implementing a single Lambda Function URL that routes to all five experiments.

What is AgentCore?

Amazon Bedrock AgentCore is an AWS service designed as a foundation for building, deploying, and operating AI agents at scale. It offers a serverless-style infrastructure for agent workloads, including:

- Serverless runtime

- Memory management for short- and long-term context

- Tool integration via AgentCore Gateway

- Secure authentication

Architecture of the Experiment

Terraform Module

Each agent is deployed using a reusable Terraform module (modules/agentcore-runtime/) that encapsulates all the infrastructure needed for a single AgentCore runtime. This module creates:

- ECR Repository: Stores the Docker image for the agent container

- IAM Role: Execution role with ECR pull permissions and optional extra policies (SQS, S3, DynamoDB)

- AgentCore Memory: Persistent memory resource for session context

- AgentCore Runtime: The actual runtime that executes the agent container

The module accepts parameters like agent_name, extra_policy_arns, and environment_variables, making it easy to deploy new agents by simply defining a new module block with different configurations. Docker images are built locally and pushed to ECR, then referenced by the runtime via the image URI.

This modular approach allows each step (step0 through step4) to share the same infrastructure pattern while varying only the agent code and AWS service integrations.

Experiments

Step 0: AgentCore with AI Model (Nova Lite), the Baseline

Agent: terraform/agents/step0_nova/

AgentCore with Amazon Nova Lite model via the Bedrock Converse API. Proves the runtime works with AI inference.

    curl -X POST <lambda-url>/step0 \
      -H 'Content-Type: application/json' \
      -d '{"prompt": "Hello!"}'
    # → {"result": "Hello", "model": "us.amazon.nova-lite-v1:0"}

Note: Step 0 uses a cross-region inference profile (us.amazon.nova-lite-v1:0). The IAM policy requires arn:aws:bedrock:*:*:inference-profile/* to allow routing across regions.
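For illustration, the step 0 agent could call Nova Lite through the Converse API roughly as follows. This is a sketch, not the repository's code: the function name `ask_nova` and the injected `client` argument are assumptions made for this example, while `converse` and its request/response shape follow the boto3 `bedrock-runtime` client.

```python
MODEL_ID = "us.amazon.nova-lite-v1:0"  # cross-region inference profile used in step 0


def ask_nova(client, prompt: str) -> dict:
    """Send one user message through the Converse API and return the text reply.

    `client` is expected to behave like boto3.client("bedrock-runtime");
    it is passed in so the function can be exercised without AWS access.
    """
    response = client.converse(
        modelId=MODEL_ID,
        messages=[{"role": "user", "content": [{"text": prompt}]}],
    )
    # The reply text sits in the first content block of the output message.
    text = response["output"]["message"]["content"][0]["text"]
    return {"result": text, "model": MODEL_ID}
```

In a deployed agent the client would typically be created once with `boto3.client("bedrock-runtime")` and reused across invocations.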

Step 1: AgentCore without AI Model (Pure Compute)

Agent: terraform/agents/step1_no_ai/

AgentCore as a pure compute service — no AI model, just math. This is where it starts looking like Lambda.

    curl -X POST <lambda-url>/step1 \
      -H 'Content-Type: application/json' \
      -d '{"prompt": {"a": 5, "b": 3}}'
    # → {"result": 8}
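A minimal sketch of what such a model-free agent handler might look like. The function name `handle` and the error message are hypothetical; the commented wiring assumes the `bedrock-agentcore` Python SDK and is not taken from the article.

```python
def handle(payload: dict) -> dict:
    """Add the two numbers found under payload["prompt"]; no model involved."""
    numbers = payload.get("prompt", {})
    if not isinstance(numbers, dict) or "a" not in numbers or "b" not in numbers:
        return {"error": "expected a prompt like {'a': 5, 'b': 3}"}
    return {"result": numbers["a"] + numbers["b"]}


# Assumed wiring inside the container (requires the bedrock-agentcore package):
#   from bedrock_agentcore.runtime import BedrockAgentCoreApp
#   app = BedrockAgentCoreApp()
#   app.entrypoint(handle)
#   app.run()

print(handle({"prompt": {"a": 5, "b": 3}}))  # → {'result': 8}
```

Keeping the arithmetic in a plain function makes it easy to run the same code inline in Lambda, which is what the Lambda-native benchmark mode compares against.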

Step 2: AgentCore + SQS

Agent: terraform/agents/step2_sqs/

Replicates the classic Lambda + SQS pattern. Calculates the sum and sends the result to an SQS queue.

    curl -X POST <lambda-url>/step2 \
      -H 'Content-Type: application/json' \
      -d '{"prompt": {"a": 5, "b": 3}}'
    # → {"result": 8, "message_sent": true, "message_id": "..."}
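The sum-then-enqueue pattern could be sketched like this. The function name, the message body layout, and the injected `sqs` client are assumptions for illustration; `send_message` with `QueueUrl` and `MessageBody`, and the `MessageId` field in its response, are the standard boto3 SQS API.

```python
import json


def sum_and_enqueue(sqs, queue_url: str, a, b) -> dict:
    """Compute a + b and publish the result to an SQS queue.

    `sqs` is expected to behave like boto3.client("sqs").
    """
    result = a + b
    response = sqs.send_message(
        QueueUrl=queue_url,
        MessageBody=json.dumps({"a": a, "b": b, "result": result}),
    )
    return {
        "result": result,
        "message_sent": True,
        "message_id": response["MessageId"],
    }
```

The agent's execution role needs `sqs:SendMessage` on the queue, which is the kind of permission the module's extra_policy_arns parameter is meant to attach.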

Step 3: AgentCore + S3

Agent: terraform/agents/step3_s3/

Stores calculation results in S3 with date-based partitioning.

    curl -X POST <lambda-url>/step3 \
      -H 'Content-Type: application/json' \
      -d '{"prompt": {"a": 1, "b": 2}}'
    # → {"result": 3, "s3_stored": true, "s3_key": "agentcore-results/2025/11/18/uuid.json"}
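Date-based partitioning here means the object key embeds the year, month, and day. A sketch of how such keys might be built and written, assuming UTC dates and a random UUID per object (both assumptions; the helper names are hypothetical, and only the key layout comes from the sample output):

```python
import json
import uuid
from datetime import datetime, timezone

PREFIX = "agentcore-results"  # key prefix seen in the sample response


def build_key(now: datetime, object_id: str) -> str:
    """Return a key of the form agentcore-results/YYYY/MM/DD/<id>.json."""
    return f"{PREFIX}/{now:%Y/%m/%d}/{object_id}.json"


def store_result(s3, bucket: str, result, now=None) -> dict:
    """Write the result as JSON; `s3` should behave like boto3.client("s3")."""
    now = now or datetime.now(timezone.utc)
    key = build_key(now, str(uuid.uuid4()))
    s3.put_object(
        Bucket=bucket,
        Key=key,
        Body=json.dumps({"result": result}).encode("utf-8"),
        ContentType="application/json",
    )
    return {"result": result, "s3_stored": True, "s3_key": key}
```

Keys laid out this way can be listed by day with a simple prefix such as `agentcore-results/2025/11/18/`.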

Step 4: AgentCore + DynamoDB

Agent: terraform/agents/step4_dynamodb/

Stores structured data in DynamoDB with automatic TTL (30-day cleanup).

    curl -X POST <lambda-url>/step4 \
      -H 'Content-Type: application/json' \
      -d '{"prompt": {"a": 7, "b": 2}}'
    # → {"result": 9, "dynamodb_stored": true, "item_id": "uuid", "timestamp": "..."}
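DynamoDB TTL works by storing an expiry time as a Unix epoch timestamp (in seconds) in a numeric attribute that the table is configured to watch. A sketch of an item with a 30-day expiry follows; the attribute names `ttl`, `a`, and `b` and the helper names are assumptions, and integer inputs are assumed because the boto3 Table resource does not accept Python floats.

```python
import time
import uuid
from datetime import datetime, timezone

TTL_DAYS = 30  # cleanup window mentioned in the article


def build_item(a: int, b: int, now_epoch=None) -> dict:
    """Build the item to store, including an epoch-seconds TTL attribute."""
    now_epoch = int(time.time()) if now_epoch is None else int(now_epoch)
    return {
        "item_id": str(uuid.uuid4()),
        "a": a,
        "b": b,
        "result": a + b,
        "timestamp": datetime.fromtimestamp(now_epoch, tz=timezone.utc).isoformat(),
        "ttl": now_epoch + TTL_DAYS * 24 * 60 * 60,  # expiry, in epoch seconds
    }


def store_item(table, a: int, b: int) -> dict:
    """`table` should behave like boto3.resource("dynamodb").Table(name)."""
    item = build_item(a, b)
    table.put_item(Item=item)
    return {
        "result": item["result"],
        "dynamodb_stored": True,
        "item_id": item["item_id"],
        "timestamp": item["timestamp"],
    }
```

Expired items are deleted by DynamoDB in the background rather than at the exact expiry second, so reads may still see them briefly.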

Response Format

Every response includes a timing object with per-phase metrics in milliseconds:

    {
      "success": true,
      "step": "step1",
      "data": {
        "result": 8
      },
      "timing": {
        "parse_ms": 0.3,
        "invoke_ms": 245.1,
        "stream_ms": 12.4,
        "total_ms": 258.2
      },
      "request_id": "..."
    }

| Metric | Description |
|---|---|
| parse_ms | Request parsing and validation |
| invoke_ms | AgentCore invoke_agent_runtime call |
| stream_ms | Reading the streaming response |
| total_ms | End-to-end Lambda execution |
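The per-phase timings could be collected in the router along these lines. This is a sketch under stated assumptions, not the repository's handler: the `route` and `elapsed_ms` names, the route table, and the placeholder ARN are hypothetical, and request_id is omitted. The `invoke_agent_runtime` parameters and the readable `response` body follow the boto3 `bedrock-agentcore` client as I understand it.

```python
import json
import time
import uuid

# Hypothetical route table; in practice the ARNs would come from Terraform outputs.
RUNTIME_ARNS = {
    "step1": "arn:aws:bedrock-agentcore:us-east-1:123456789012:runtime/example-step1",
}


def elapsed_ms(start: float) -> float:
    """Milliseconds since `start`, rounded to one decimal like the sample response."""
    return round((time.perf_counter() - start) * 1000, 1)


def route(client, step: str, raw_body: str) -> dict:
    """Parse the request, invoke the runtime, read the reply, and time each phase."""
    t_total = time.perf_counter()

    t = time.perf_counter()
    body = json.loads(raw_body)
    arn = RUNTIME_ARNS.get(step)
    if arn is None or "prompt" not in body:
        return {"success": False, "step": step, "error": "unknown step or missing prompt"}
    parse_ms = elapsed_ms(t)

    t = time.perf_counter()
    response = client.invoke_agent_runtime(
        agentRuntimeArn=arn,
        runtimeSessionId=str(uuid.uuid4()),  # fresh 36-character session id per request
        payload=json.dumps(body).encode("utf-8"),
    )
    invoke_ms = elapsed_ms(t)

    t = time.perf_counter()
    data = json.loads(response["response"].read())  # drain the response body
    stream_ms = elapsed_ms(t)

    return {
        "success": True,
        "step": step,
        "data": data,
        "timing": {
            "parse_ms": parse_ms,
            "invoke_ms": invoke_ms,
            "stream_ms": stream_ms,
            "total_ms": elapsed_ms(t_total),
        },
    }
```

Inside the Lambda function, `client` would be `boto3.client("bedrock-agentcore")`, and `step` would be derived from the request path of the Function URL event.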

Benchmark Results

Each step was run for 10 iterations in each of three modes.

1. Lambda Router + AgentCore

Path: curl -> Lambda Function URL -> Lambda Router -> AgentCore Runtime

| Step | avg invoke_ms | avg total_ms | avg curl_ms |
|---|---|---|---|
| step0 (Nova Lite) | 1016 | 1016 | 1842 |
| step1 (pure compute) | 596 | 596 | 1360 |
| step2 (SQS) | 655 | 655 | 1437 |
| step3 (S3) | 781 | 782 | 1580 |
| step4 (DynamoDB) | 694 | 695 | 1495 |

2. Direct AgentCore (no Lambda)

Path: boto3 (local machine) -> AgentCore Runtime

| Step | avg invoke_ms | avg total_ms |
|---|---|---|
| step0 (Nova Lite) | 1602 | 1603 |
| step1 (pure compute) | 1200 | 1200 |
| step2 (SQS) | 1227 | 1228 |
| step3 (S3) | 1261 | 1261 |
| step4 (DynamoDB) | 1190 | 1190 |

3. Lambda-native (no AgentCore)

Path: curl -> Lambda Function URL -> Lambda Router -> inline code

| Step | avg exec_ms | avg total_ms | avg curl_ms |
|---|---|---|---|
| step0 (Nova Lite) | 360 | 360 | 1123 |
| step1 (pure compute) | ~0 | ~0 | 753 |
| step2 (SQS) | 39 | 39 | 789 |
| step3 (S3) | 71 | 71 | 835 |
| step4 (DynamoDB) | 50 | 50 | 843 |

Key observations:

- AgentCore adds ~600-700ms of overhead per invocation compared to Lambda. This is the cost of the containerized runtime (image pull, container startup, HTTP routing); it is expected behavior, not a surprise

- Lambda-native pure compute (step1) executes in <1ms. The same operation in AgentCore takes ~596ms — the ~600ms difference is the AgentCore container overhead

- AWS service calls (SQS, S3, DynamoDB) are fast from both Lambda and AgentCore (~40-70ms from Lambda, ~60-185ms from AgentCore once the step1 baseline is subtracted)

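- The Lambda Router adds no measurable overhead to the AgentCore call itself: invoke_ms measured inside Lambda (~600-1000ms) is lower than for direct boto3 calls from a local machine (~1200-1600ms), most likely because Lambda runs inside the AWS network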

- Nova Lite AI inference takes ~360ms from Lambda vs ~1016ms from AgentCore. The difference (~656ms) is the AgentCore container overhead, not the model

- For AI agent workloads where the model inference dominates (seconds), the ~600ms AgentCore overhead is negligible. For pure compute or simple integrations, Lambda is significantly faster
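The overhead figures quoted above can be reproduced from the benchmark tables by subtracting the Lambda-native execution time from the AgentCore invoke time for each step. A small sketch of that arithmetic, treating the "~0" for step1 as 0 (an assumption):

```python
# avg invoke_ms for Lambda Router + AgentCore (mode 1)
AGENTCORE_MS = {"step0": 1016, "step1": 596, "step2": 655, "step3": 781, "step4": 694}
# avg exec_ms for Lambda-native (mode 3); step1 is reported as "~0"
LAMBDA_MS = {"step0": 360, "step1": 0, "step2": 39, "step3": 71, "step4": 50}

# Per-step overhead attributable to going through AgentCore
overhead = {step: AGENTCORE_MS[step] - LAMBDA_MS[step] for step in AGENTCORE_MS}

for step, ms in overhead.items():
    print(f"{step}: {ms} ms")
# → step0: 656 ms, step1: 596 ms, step2: 616 ms, step3: 710 ms, step4: 644 ms

average = sum(overhead.values()) / len(overhead)
print(f"average: {average:.1f} ms")  # → average: 644.4 ms
```

The per-step differences fall between 596ms and 710ms, which is where the ~600-700ms range comes from.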

Conclusion: Is AgentCore the New Lambda?

Short answer: not yet. It was an interesting exercise, though, and AgentCore is a reasonable choice for some workloads.

AgentCore adds ~600ms of overhead per invocation compared to Lambda. For a pure sum operation, Lambda executes in <1ms while AgentCore takes ~596ms. That is the cost of the containerized runtime — image pull, container routing, HTTP serialization.

However, when the workload itself is heavy (AI model inference, complex processing), that ~600ms becomes noise. In this benchmark the Nova Lite call is short (~360ms from Lambda, ~1016ms via AgentCore), so the container overhead is still larger than the inference itself; it only fades into the background once the work takes seconds.
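To make the trade-off concrete, the share of each request spent on runtime overhead can be estimated as overhead / (work + overhead). The sketch below assumes a flat 600ms overhead (the approximate figure from the benchmarks); the 5-second and 30-second durations are hypothetical examples, not measurements.

```python
OVERHEAD_MS = 600  # approximate AgentCore overhead measured in the benchmarks


def overhead_share(work_ms: float, overhead_ms: float = OVERHEAD_MS) -> float:
    """Fraction of the total request time that is runtime overhead."""
    return overhead_ms / (work_ms + overhead_ms)


# 1 ms: pure compute; 360 ms: the Nova Lite call; 5 s and 30 s: hypothetical agent runs
for work_ms in (1, 360, 5_000, 30_000):
    print(f"{work_ms:>6} ms of work -> {overhead_share(work_ms):.1%} overhead")
# →      1 ms of work -> 99.8% overhead
# →    360 ms of work -> 62.5% overhead
# →   5000 ms of work -> 10.7% overhead
# →  30000 ms of work -> 2.0% overhead
```

Under these assumptions the overhead drops to roughly a tenth of the request once the work reaches about five seconds.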

Where AgentCore makes sense:

- AI agent workloads where inference takes seconds

- Long-running processes (up to 8 hours of continuous execution vs Lambda’s 15-minute limit per invocation; Step Functions or Lambda durable functions can orchestrate longer workflows, but each Lambda invocation is still capped at 15 minutes)

- Workloads that need persistent memory across sessions

- Container-based deployments with custom dependencies

Where Lambda wins:

- Pure compute (<1ms vs ~600ms)

- Simple AWS service integrations (SQS, S3, DynamoDB)

- Cost-sensitive workloads (Lambda bills per ms, AgentCore has container overhead)

- Latency-critical applications

The most interesting finding: the Lambda Router adds no measurable overhead to the AgentCore call. The invocation measured inside Lambda is actually faster than a direct boto3 call from a local machine, most likely because Lambda runs inside the AWS network. This means you can use Lambda as a lightweight proxy to AgentCore without a latency penalty on the invocation itself.

AgentCore is not the new Lambda — it is something different. It is Lambda for AI agents: same serverless philosophy, but optimized for long-running, stateful, AI-powered workloads.


Thanks for reading.

Cheers!

Oscar Cortés